AI Stack Evolution: Scaling Agentic AI for Enterprise CX

Nutanix Targets Enterprise AI Scale with Integrated Agentic AI Stack

As enterprises accelerate their adoption of artificial intelligence, the focus is shifting from experimentation to operational scale. Nutanix’s latest announcement introduces a full-stack software solution aimed at addressing the complexities of deploying and managing agent-based AI systems in production environments.

The offering is designed to support what the company describes as “AI factories”—large-scale environments where organizations can build, deploy, and operate thousands of AI agents simultaneously. The move reflects a broader industry transition toward industrializing AI capabilities, particularly in customer-facing and operational domains.

From Intelligent Automation to Autonomous Systems

Customer experience strategies are evolving rapidly as AI becomes central to engagement models. Organizations are moving beyond rule-based automation toward systems capable of reasoning, decision-making, and executing multi-step processes independently.

This evolution is driven by rising customer expectations. Consumers increasingly demand real-time, personalized, and context-aware interactions across digital and physical channels. Meeting these expectations requires not only advanced AI models but also the infrastructure to support them at scale.

However, many enterprises face challenges in operationalizing AI. Fragmented environments, inconsistent data access, and high infrastructure costs can limit the ability to move from pilot projects to enterprise-wide deployment. These constraints are particularly evident in customer experience functions, where reliability, speed, and personalization are critical.

Positioning for the AI Infrastructure Layer

Nutanix’s approach reflects a strategic effort to position itself as a foundational layer for enterprise AI. Traditionally focused on hybrid multicloud infrastructure, the company is extending its capabilities to address the full lifecycle of AI deployment.

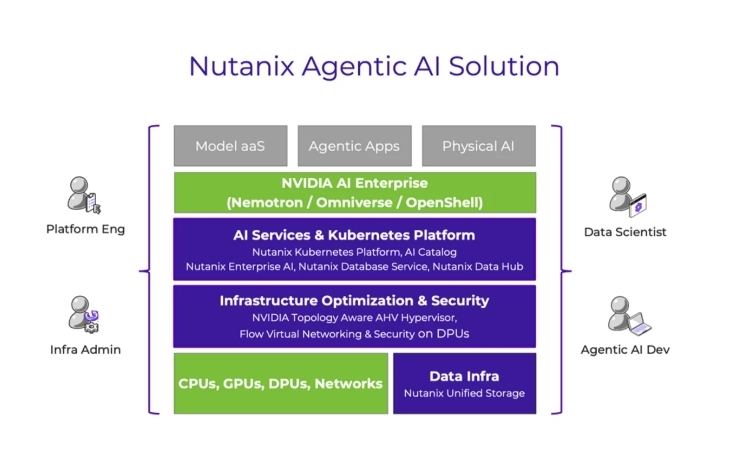

The solution integrates virtualization, networking, Kubernetes orchestration, and AI platform services into a unified stack. By doing so, Nutanix aims to simplify the management of complex AI environments while maintaining performance and security.

A key aspect of this strategy is the collaboration with NVIDIA, which aligns Nutanix’s platform with widely adopted AI tools, models, and infrastructure standards. This ecosystem approach is intended to improve interoperability and let enterprises build on existing investments as they scale AI initiatives.

As Thomas Cornely, Executive Vice President of Product Management at Nutanix, explains, production AI systems differ significantly from traditional workloads. They must handle thousands of concurrent agents, rapid changes, and continuous interaction across users and systems.

How the Technology Works

At a technical level, the solution combines infrastructure orchestration with platform-level AI services to create a cohesive operating environment for AI workloads.

The platform includes Kubernetes-based environments that support the deployment and scaling of AI applications. It also introduces Models-as-a-Service capabilities, allowing organizations to access, manage, and fine-tune AI models across cloud and on-premises environments.

An integrated AI gateway enables centralized policy control, helping enterprises enforce governance and compliance across both public and private AI models. This is particularly relevant for organizations operating in regulated industries or managing sensitive customer data.
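To make the idea of centralized policy control concrete, the following is a minimal sketch of a gateway that checks every model request against one shared policy before forwarding it. It is not Nutanix's implementation or API. The names `ModelEndpoint`, `GatewayPolicy`, and `AIGateway`, the "public"/"private" hosting labels, and the data classifications are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ModelEndpoint:
    """A model reachable through the gateway (hypothetical type)."""
    name: str
    hosting: str  # assumed labels: "public" or "private"


@dataclass
class GatewayPolicy:
    """One central rule set: which data classes each hosting type may receive."""
    allowed: dict[str, set[str]] = field(default_factory=lambda: {
        "public": {"general"},
        "private": {"general", "customer_pii"},
    })

    def permits(self, endpoint: ModelEndpoint, classification: str) -> bool:
        # Unknown hosting types get an empty allow-list, so they are denied.
        return classification in self.allowed.get(endpoint.hosting, set())


class AIGateway:
    """Applies the policy to every request and records each decision."""

    def __init__(self, policy: GatewayPolicy) -> None:
        self.policy = policy
        self.audit_log: list[tuple[str, str, bool]] = []

    def route(self, endpoint: ModelEndpoint, classification: str, prompt: str) -> str:
        allowed = self.policy.permits(endpoint, classification)
        # Log both permitted and blocked requests for later compliance review.
        self.audit_log.append((endpoint.name, classification, allowed))
        if not allowed:
            raise PermissionError(
                f"{classification!r} data may not be sent to {endpoint.name!r}"
            )
        # A real gateway would call the model here; this sketch only reports routing.
        return f"forwarded to {endpoint.name}"
```

In this sketch, a request carrying `customer_pii` data is forwarded to a private endpoint but raises `PermissionError` for a public one, and both decisions land in `audit_log`. Keeping the rules in a single `GatewayPolicy` object, rather than in each application, is what makes the control centralized.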

Infrastructure-level enhancements further optimize performance. These include GPU-aware virtualization, advanced networking capabilities, and data services designed for high-throughput, low-latency operations. Together, these components aim to improve resource utilization while reducing operational complexity.

Industry analyst Steve McDowell notes that bringing together multiple layers of the AI stack—from infrastructure to models—can reduce friction and enable more coherent enterprise deployments.

Implications for Customer Experience

For customer experience leaders, the ability to deploy AI at scale has direct implications for service delivery and engagement strategies.

Agent-based systems can enable more dynamic and context-aware customer journeys. For example, AI agents can independently handle complex service requests, provide personalized recommendations, and orchestrate interactions across multiple channels in real time.

Scalable infrastructure can also improve service reliability. By optimizing resource allocation and reducing latency, organizations can deliver faster, more consistent interactions. This is particularly important in high-volume environments such as contact centers, digital commerce platforms, and financial services.

Operational efficiency is another key benefit. Streamlined infrastructure management reduces the time and effort required to deploy new CX capabilities, allowing organizations to innovate more rapidly.

At the same time, enhanced security and governance features support trust. As AI systems interact more deeply with customer data, ensuring compliance and transparency becomes essential to maintaining strong customer relationships.

Broader Industry Implications

The introduction of integrated AI stacks reflects a broader shift toward platform-based approaches in enterprise technology. Organizations are increasingly looking for unified environments that combine infrastructure, data, and AI services.

This trend is reshaping the competitive landscape. Technology providers are moving beyond individual solutions to deliver end-to-end platforms that simplify deployment and management. Partnerships between software vendors and hardware providers are becoming central to this evolution.

Cost management is also emerging as a critical factor. As AI adoption scales, organizations are focusing on optimizing resource usage and controlling operational expenses. Solutions that offer predictable cost structures and efficient performance are likely to gain traction.

Looking Ahead: The Future of AI-Driven CX

The transition to agent-based AI represents a significant milestone in the evolution of customer experience. As systems become more autonomous, organizations will be able to deliver more proactive, personalized, and efficient interactions.

However, achieving this vision requires more than advanced algorithms. It depends on the ability to operationalize AI at scale, supported by infrastructure that ensures performance, security, and governance.

Nutanix’s latest announcement highlights the growing importance of this foundation. By focusing on the operational challenges of AI deployment, the company is addressing a key barrier to enterprise adoption.

For CX leaders, the message is clear: the next phase of digital transformation will be defined not just by innovation, but by execution. Organizations that can effectively scale and manage AI will be better positioned to deliver consistent, high-quality customer experiences in an increasingly competitive landscape.

Key Takeaways:

• Enterprises are shifting from AI experimentation to large-scale deployment

• Infrastructure complexity is a primary barrier to scaling AI

• Agentic AI enables more autonomous and adaptive customer interactions

• Integrated AI stacks are emerging as a preferred enterprise model

• Cost, governance, and performance are critical to sustainable CX innovation

The post AI Stack Evolution: Scaling Agentic AI for Enterprise CX appeared first on CX Quest.
